AI Platform Shifts Operators Should Track This Quarter (and What They Change in Ops)

Operational friction you can feel before you can name it

Most operators aren’t struggling to “adopt AI.” They’re struggling to keep work moving when the surface area of requests expands faster than the operating system underneath it. Intake grows. Exceptions multiply. Clients expect faster turnaround. Internal teams ask for more visibility. And the underlying question becomes: who owns the first response, the handoff, the follow-up, and the audit trail?

This quarter’s platform movement makes that pressure more visible because new capabilities are landing in places operators actually touch: enterprise agent frameworks, voice interfaces, and models tuned for complex reasoning and longer workflows. That’s not a novelty layer; it changes what work can be handled end-to-end without adding headcount.

But the friction remains operational: approvals, policy, data boundaries, and making sure outputs are reliable enough to enter real workflows. If you’ve run pilots that stalled after the demo, the cause usually isn’t that the model “wasn’t smart enough.” It’s that no one defined the responsibilities or assigned operational ownership for the work you hoped AI employees would perform, especially when the goal is replacing repeatable roles rather than sprinkling automation onto tasks.

Why the common approach fails

The common approach is still shaped like a technology project: pick a platform, run a proof of concept, integrate a few endpoints, and ask a team to “use it.” That can create activity without creating capacity. Operators end up with a new queue—prompt requests, review requests, “can you check this output” requests—without any redesign of role boundaries.

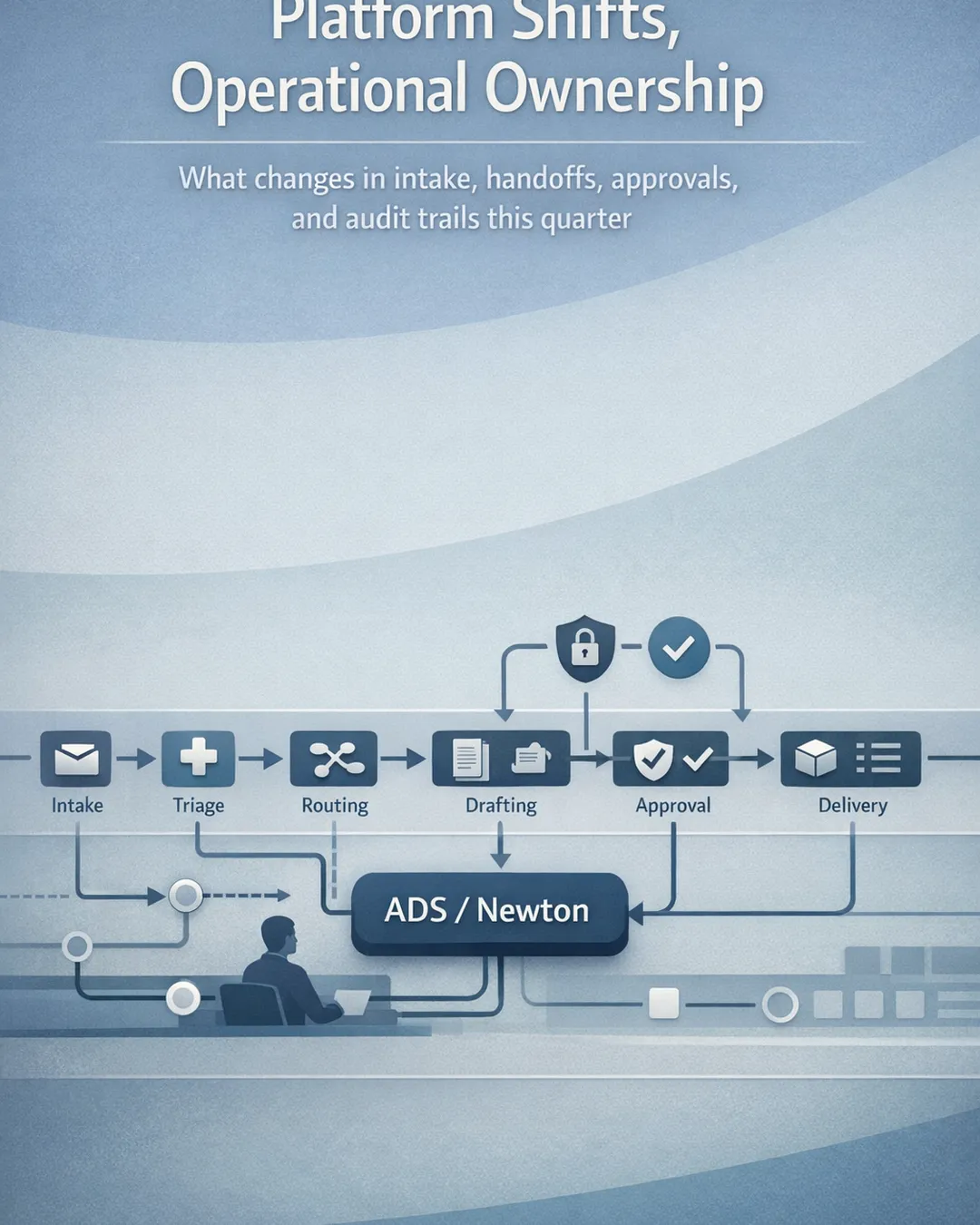

A second failure mode shows up when companies treat new platform announcements as feature checklists. Voice is available, so someone prototypes voice. Enterprise agents are announced, so a team builds a few agents. A new model ships, so people swap model IDs and expect workflow outcomes to improve. The problem is that real operations don’t break down into model calls. They break down into responsibilities: triage, data collection, drafting, approvals, escalation, and closure—plus reporting and compliance.

This quarter’s headlines can reinforce that pattern if you read them as feature lists. TechCrunch reports Anthropic’s push for enterprise agents with plugins aimed at finance, engineering, and design, an indicator that vendors are packaging more “agent-shaped” building blocks for business teams. xAI’s Voice API positions voice agents that can speak, think, and act, which means conversations can become operational interfaces, not just customer service experiments. Google’s Gemini 3.1 Pro announcement emphasizes capability on complex tasks, and Google Cloud’s post on Gemini 3.1 Pro across Gemini CLI, Gemini Enterprise, and Vertex AI highlights distribution into enterprise workflows, not just labs.

Each of these shifts makes it easier to *do* something. None of them automatically answers: who owns the work, what is the approval gate, where is the audit trail, and how do we ensure tenant isolation and policy compliance? Without those answers, AI employees become a layer of task execution that operators must babysit—rather than a system for replacing repeatable roles with defined responsibilities and operational ownership.

Reframe: role ownership vs task execution

The more useful way to read platform movement is not “what can the model do,” but “what role boundary can we now assign to AI employees with confidence?”

When platforms add enterprise agent packaging (like the Anthropic enterprise agent push), it signals maturation around composability and domain integration. That matters operationally only if you translate it into a role: for example, “first-draft financial variance narrative owner” or “engineering change-log summarization owner,” with defined responsibilities, inputs, outputs, and escalation rules. Otherwise it stays as a toolbox.

When voice interfaces mature (as suggested by xAI’s Voice API), the operational question is: where does voice reduce friction without weakening controls? Voice can be a front door for intake and status checks, but it can also create ambiguity if you don’t specify what counts as a request, what gets logged, and what requires written confirmation. If you treat voice as a novelty channel, you get inconsistent intake. If you treat voice as a role interface—“intake coordinator, voice-enabled”—you can require structured capture, confirmation steps, and routing.
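A minimal Python sketch of that confirmation rule follows. The `Channel` enum, the `IntakeRequest` fields, and the `ready_to_route` function are hypothetical names chosen for illustration, not part of any vendor's voice API. The idea it shows: a spoken request only becomes routable once it has a category and, because it arrived by voice, a written confirmation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Channel(Enum):
    TYPED = "typed"
    VOICE = "voice"


@dataclass
class IntakeRequest:
    # Hypothetical intake record; field names are illustrative only.
    channel: Channel
    summary: str
    category: Optional[str] = None
    confirmed_in_writing: bool = False


def ready_to_route(req: IntakeRequest) -> bool:
    """Return True only when the request is structured enough to route."""
    # Every request, typed or spoken, needs a non-empty summary and a category.
    if not req.summary.strip() or req.category is None:
        return False
    # Voice requests additionally need a written confirmation step.
    if req.channel is Channel.VOICE and not req.confirmed_in_writing:
        return False
    return True


if __name__ == "__main__":
    spoken = IntakeRequest(Channel.VOICE, "Status of the Q3 filing", category="status_check")
    print(ready_to_route(spoken))  # False until confirmed in writing
    spoken.confirmed_in_writing = True
    print(ready_to_route(spoken))  # True
```

Keeping the confirmation check in one function means the voice channel cannot quietly bypass the rules that typed intake already follows.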

When models improve for complex tasks and appear across enterprise surfaces (as with Gemini 3.1 Pro in Gemini Enterprise and Vertex AI), the shift is not raw intelligence. It’s the feasibility of longer, multi-step workflows that previously fell apart midstream: synthesizing multi-document context, reconciling contradictory notes, or producing drafts that are closer to client-ready. The operational move is to assign those workflows to AI employees as defined roles, with operational ownership and approval gates, replacing repeatable work rather than expecting individuals to “use the model” ad hoc.

This reframe also clarifies governance. “Task execution” governance is about restricting usage. “Role ownership” governance is about specifying: what the role is allowed to do, what it is not allowed to do, what it must log, and who signs off. That’s how you make AI employees production-safe in professional services environments where accuracy and defensibility matter.
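To make “role ownership” governance concrete, here is a small Python sketch, assuming a policy expressed as three lists: actions the role may take alone, actions that need sign-off, and the person who signs off. `RolePolicy`, `decide`, and the action names are hypothetical. Anything not listed is denied, and every decision, including denials, is appended to an audit log.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class RolePolicy:
    # Hypothetical policy: what the role may do alone, what needs sign-off, and by whom.
    role: str
    allowed: FrozenSet[str]
    requires_signoff: FrozenSet[str]
    signoff_owner: str


def decide(policy: RolePolicy, action: str, audit_log: List[Dict[str, str]]) -> str:
    """Classify an action under the policy and append the decision to the audit log."""
    # Sign-off is checked first, so an action listed in both sets is still gated.
    if action in policy.requires_signoff:
        outcome = "pending_signoff"
    elif action in policy.allowed:
        outcome = "allowed"
    else:
        # Anything not explicitly listed is outside the role's boundary.
        outcome = "denied"
    # Every decision is logged, including denials, so the trail is complete.
    audit_log.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "role": policy.role,
        "action": action,
        "outcome": outcome,
        "signoff_owner": policy.signoff_owner if outcome == "pending_signoff" else "",
    })
    return outcome


if __name__ == "__main__":
    log: List[Dict[str, str]] = []
    policy = RolePolicy(
        role="variance_narrative_drafter",
        allowed=frozenset({"read_ledger_export", "draft_narrative"}),
        requires_signoff=frozenset({"send_to_client"}),
        signoff_owner="engagement_manager",
    )
    print(decide(policy, "draft_narrative", log))  # allowed
    print(decide(policy, "send_to_client", log))   # pending_signoff
    print(decide(policy, "edit_ledger", log))      # denied
```

The default-deny branch is the design choice that separates this from usage restriction: the role's boundary is whatever the policy names, not whatever nobody thought to block.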

Practical implications operators can act on this quarter

Start with two role maps: one for customer-facing work and one for internal operations. Highlight the roles where some output variability is acceptable because an approval gate catches it before anything ships. Those are prime candidates for AI employees: repeatable roles that can be given defined responsibilities.

For an Operations Leader, the immediate opportunity is usually intake-to-resolution flow: triage, request clarification, and routing. Treat voice and agent frameworks as interface options, not strategy. Set a policy that every request—whether typed or spoken—becomes a structured record with category, priority, required artifacts, and owner. Then assign AI employees operational ownership for “first response and clarification,” with escalation thresholds. This reduces the “nobody owns first response” failure pattern without asking senior staff to live in the queue.
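A rough Python sketch of “first response and clarification” ownership follows, under stated assumptions: the `Request` record, the numeric priority scale (1 = most urgent), the cutoff value, and the owner names are all hypothetical. Urgent requests escalate to a human; everything else is owned by the AI employee, which asks for any required artifacts that have not arrived yet.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical cutoff: requests at this priority number or lower (more urgent) go to a human.
ESCALATION_PRIORITY_CUTOFF = 1


@dataclass
class Request:
    # Hypothetical structured record created from every typed or spoken request.
    request_id: str
    category: str
    priority: int  # 1 = most urgent; larger numbers are less urgent (illustrative scale)
    required_artifacts: List[str] = field(default_factory=list)
    received_artifacts: List[str] = field(default_factory=list)
    owner: str = "unassigned"


def assign_first_response(req: Request, ai_owner: str, human_owner: str) -> Tuple[str, List[str]]:
    """Assign first-response ownership and return (next_step, missing_artifacts)."""
    missing = [a for a in req.required_artifacts if a not in req.received_artifacts]
    if req.priority <= ESCALATION_PRIORITY_CUTOFF:
        req.owner = human_owner
        return "escalated", missing
    req.owner = ai_owner
    # The AI employee owns clarification: ask for whatever is still missing.
    return ("request_clarification" if missing else "proceed"), missing


if __name__ == "__main__":
    r = Request("R-1", "billing", priority=3, required_artifacts=["invoice_no"])
    print(assign_first_response(r, "ai_intake", "ops_lead"), r.owner)
```

Every request ends with a named owner, which is the property the policy above is meant to guarantee.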

For a Technology Director, the quarter’s platform shifts raise a different question: how do we keep experimentation from creating brittle, self-managed stacks that only one person understands? If you want AI employees to be part of your operating model, you need runtime consistency: tenant isolation, approval gating, logging, and safe data handling. The model layer will keep changing: new releases, new endpoints, new deployment surfaces. Your operational design should assume model swap-outs will happen while the role definition stays stable.
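One way to keep the role definition stable while models change is to put a thin interface between them. The Python sketch below assumes a hypothetical `TextModel` protocol with a single `complete` method; `RoleDefinition`, `AIEmployee`, and the two stub backends are illustrative names, not any provider's SDK. Swapping the backend changes the output, but the role name and approval status do not move.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


class TextModel(Protocol):
    # Hypothetical interface: any backend with this method can fill the role.
    def complete(self, prompt: str) -> str: ...


@dataclass(frozen=True)
class RoleDefinition:
    name: str
    instructions: str
    requires_approval: bool


class AIEmployee:
    """Binds a stable role definition to whichever model backend is current."""

    def __init__(self, role: RoleDefinition, model: TextModel) -> None:
        self.role = role
        self.model = model

    def swap_model(self, model: TextModel) -> None:
        # Only the backend changes; responsibilities and approval gates do not.
        self.model = model

    def run(self, task: str) -> Dict[str, str]:
        output = self.model.complete(f"{self.role.instructions}\n\nTask: {task}")
        status = "awaiting_approval" if self.role.requires_approval else "released"
        return {"role": self.role.name, "status": status, "output": output}


# Stand-in backends for illustration; real ones would wrap a provider's client library.
class StubModelA:
    def complete(self, prompt: str) -> str:
        return "A: " + prompt.splitlines()[-1]


class StubModelB:
    def complete(self, prompt: str) -> str:
        return "B: " + prompt.splitlines()[-1]


if __name__ == "__main__":
    role = RoleDefinition("change_log_summarizer", "Summarize merged changes.", True)
    employee = AIEmployee(role, StubModelA())
    print(employee.run("release 4.2"))
    employee.swap_model(StubModelB())
    print(employee.run("release 4.2"))
```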

A practical test: can you describe each AI employee in a one-page “role card” with defined responsibilities, inputs, outputs, approvals, and exception handling? If not, you’re still in task execution mode. Another test: can you produce an audit trail for any output that touched a client deliverable? If not, you’re not production-safe, regardless of how advanced the platform is.
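Both tests can be written down directly. This Python sketch assumes a hypothetical `RoleCard` whose sections mirror the list in the paragraph above, plus an event log of dictionaries with `ts` and `deliverable_id` keys; none of these names come from a specific product. `missing_sections` flags an incomplete card, and `audit_trail` pulls every event that touched a given deliverable.

```python
from dataclasses import dataclass, fields
from typing import Dict, List


@dataclass
class RoleCard:
    # Hypothetical one-page role card; sections mirror the first test above.
    role: str
    responsibilities: List[str]
    inputs: List[str]
    outputs: List[str]
    approvals: List[str]
    exception_handling: List[str]


def missing_sections(card: RoleCard) -> List[str]:
    """Names of empty sections; any result means the role is still in task execution mode."""
    return [f.name for f in fields(card) if f.name != "role" and not getattr(card, f.name)]


def audit_trail(events: List[Dict[str, str]], deliverable_id: str) -> List[Dict[str, str]]:
    """Every logged event that touched a given client deliverable, in time order."""
    touched = [e for e in events if e.get("deliverable_id") == deliverable_id]
    # Assumes ISO 8601 timestamps in one timezone, so string order matches time order.
    return sorted(touched, key=lambda e: e["ts"])


if __name__ == "__main__":
    card = RoleCard("intake_coordinator", ["first response"], ["request record"],
                    ["routed ticket"], [], ["escalate priority 1"])
    print(missing_sections(card))  # ['approvals']
```

An empty result from `audit_trail` for a deliverable that went to a client is exactly the “not production-safe” signal the second test describes.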

Finally, set a quarterly platform review cadence that is explicitly operational: “What new capability allows us to replace repeatable roles we previously couldn’t?” and “What changes our control design?” Not “what should we try,” but “what can we own.”

Where Agentic Desk Solutions fits

Agentic Desk Solutions helps operators move from pilots to operational ownership by deploying AI employees as a managed, hosted, approval-gated, tenant-isolated workforce model (ADS/Newton). Instead of asking your team to assemble and maintain a self-managed stack, we focus on production-safe role deployment: defined responsibilities, approval gates, logging, and escalation paths that match professional services realities. We also support client workflows without local runtime setup, so teams can adopt role-based AI employees without turning every workflow into an internal platform project. If you’re tracking this quarter’s platform shifts and want a calm, operational path to replacing repeatable roles, we can map the first two roles and the controls around them in a working session.

Sources

- Anthropic launches new push for enterprise agents with ... — https://techcrunch.com/2026/02/24/anthropic-launches-new-push-for-enterprise-agents-with-plugins-for-finance-engineering-and-design

- Voice API: Build Voice Agents That Speak, Think, and Act — https://x.ai/api/voice

- Gemini 3.1 Pro: A smarter model for your most complex tasks — https://blog.google/innovation-and-ai/models-and-research/gemini-models/gemini-3-1-pro

- Gemini 3.1 Pro on Gemini CLI, Gemini Enterprise, and ... — https://cloud.google.com/blog/products/ai-machine-learning/gemini-3-1-pro-on-gemini-cli-gemini-enterprise-and-vertex-ai